Democratizing AI governance through public participation

We help communities, civil society, and public institutions test, understand, and govern AI systems in real-world contexts.

EXPLORE OUR APPROACH

AI systems increasingly shape access to services, information, and rights

Yet the people most affected are often excluded from decisions about how risks are defined, tested, and addressed. This is especially true across Global Majority contexts, where social, political, and institutional realities differ from the settings most evaluation methods were designed for.

We translate participation into action by:

Building critical AI literacy so communities and civil society can engage with AI systems on equal footing

Bringing community perspectives into evaluation and oversight

Turning findings into safeguards, decisions, and accountability pathways

Surfacing real-world harms that don't show up in internal testing

OUR APPROACH

We partner with public institutions and civil society to understand real needs and evaluate AI systems in the contexts where they are deployed, especially in public-facing services.

Critical AI literacy

Workshops and curricula that prepare communities and practitioners to engage meaningfully and critically with AI systems and emerging technologies.

Community-led AI red-teaming

Structured exercises that test systems against realistic scenarios, local contexts, and lived experience, powered by our purpose-built platform for red-teaming workflows and evidence capture.

Deliberation + accountability loop

Participatory forums that translate findings into choices, safeguards, governance commitments, and monitoring.

Partner with us

For Civil Society & Communities

Participate in processes that shape how AI systems work in your context. Build power through critical AI literacy.

For Public Institutions & Governments

Evaluate AI systems before launch, or audit them once they are in production. Build participatory oversight that reflects the communities you serve.

For Funders & Foundations

Support participatory AI governance with measurable, traceable impact. Fund interventions grounded in community needs.

Co-design a participatory AI literacy, red-teaming, or deliberation engagement with SOMOS Civic Lab, tailored to your context and grounded in local community perspectives.

SUPPORTED BY

Ethics & Tech Practitioner Fellowship

AI Accountability CoLab

AI Trust & Safety Re-Imagination Programme

MEET THE TEAM

We build with communities and partners to translate lived experience into accountability

We're a team of researchers and practitioners working at the intersection of AI governance, public participation, and real-world evaluation.

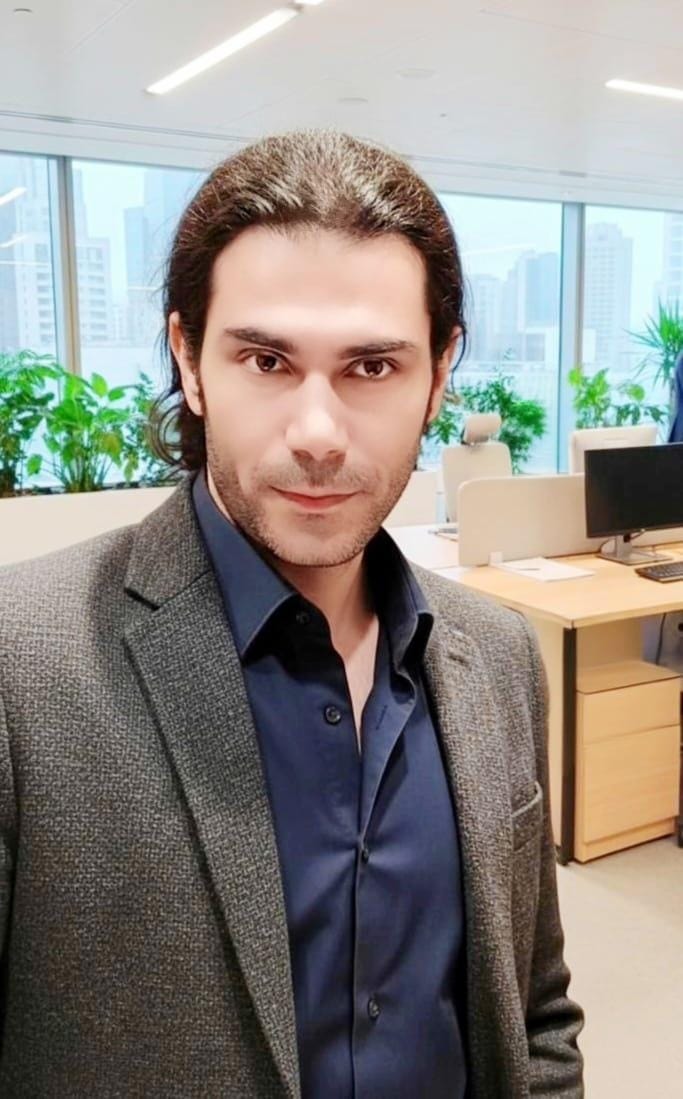

JP Gomes

Carlos Centeno